Teckel is a Scala / Apache Spark reference implementation of the Teckel Specification — a declarative YAML language for defining data transformation pipelines. You describe what you want done; Teckel parses the YAML, builds the DAG, validates references, and executes it on Spark.

Background reading: Big Data with Zero Code.

| Project | Role |
|---|---|
| eff3ct0/teckel-spec | Language-agnostic specification (current: v3.0). Defines syntax, semantics, expression grammar, validation rules, conformance levels, error catalog. |
| eff3ct0/teckel (this repo) | Scala 2.13 + Apache Spark 3.5.x reference implementation. |
| eff3ct0/teckel-rs | Rust implementation (parser + model layer for v3.0). |

- Declarative YAML pipelines: sources, transformations, sinks defined as data, not code.
- 45+ built-in transformations: relational (`select`, `where`, `group`, `order`, `join`, `union` / `intersect` / `except`, `distinct`, `limit`), column ops (`addColumns`, `dropColumns`, `renameColumns`, `castColumns`), analytical (`window`, `pivot`, `unpivot`, `flatten`, `sample`, `rollup`, `cube`, `groupingSets`), warehousing (`scd2`, `merge`, `enrich`, `schemaEnforce`), control flow (`conditional`, `split`, `sql`), v3.0 additions (`offset`, `tail`, `fillNa`, `dropNa`, `replace`, `parse`, `asOfJoin`, `lateralJoin`, `transpose`, `describe`, `crosstab`, `hint`), and pluggable `custom` components.
- Variable substitution: `${VAR}` and `${VAR:default}` resolved from env vars.
- Secrets: `{{secrets.<alias>}}` placeholders backed by env vars or pluggable providers.
- Hooks: `preExecution` / `postExecution` shell commands around the pipeline run.
- Pipeline config: backend selection, cache policy, notifications, custom component declarations.
- Streaming I/O: `streamingInput` / `streamingOutput` for Structured Streaming pipelines.
- Dry-run & validation: preview the execution plan and catch broken references before Spark starts.
- Doc generation: render any pipeline as Markdown.
- DAG visualization: Mermaid, DOT, or ASCII output.
- Embedded REST server: expose pipelines as HTTP endpoints with no extra dependencies.

Naming note: this implementation uses `group` / `order` as the YAML keys (instead of the spec-canonical `groupBy` / `orderBy`). All other top-level keys and operation names match the spec.

    version: "3.0"
    input:
      - name: employees
        format: csv
        path: 'data/employees.csv'
        options:
          header: true
          inferSchema: true
    transformation:
      - name: active
        where:
          from: employees
          filter: "status = 'active'"
      - name: by_dept
        group:
          from: active
          by: [department]
          agg:
            - "count(*) as headcount"
            - "avg(salary) as avg_salary"
    output:
      - name: by_dept
        format: parquet
        mode: overwrite
        path: 'data/output/by_dept'

More examples under docs/etl/: simple.yaml, complex.yaml, join.yaml, window.yaml, merge.yaml, quality.yaml, secrets.yaml, streaming.yaml, and many more.
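The variable substitution feature listed earlier can be combined with a pipeline like the one above. The following is a minimal sketch, not taken from the project: it assumes placeholders are accepted inside `path` values and that a default may contain a slash, and `INPUT_DIR`, `OUTPUT_DIR`, and `PIPELINE_FILE` are hypothetical names. The heredoc delimiter is quoted so the shell leaves `${...}` untouched and the placeholders reach Teckel as written.

```shell
#!/bin/sh
# Hypothetical names: INPUT_DIR, OUTPUT_DIR and PIPELINE_FILE are illustrative only.
export INPUT_DIR="data"
PIPELINE_FILE="${PIPELINE_FILE:-pipeline.yaml}"

# Quoting 'EOF' stops the shell from expanding ${...}, so the placeholders
# are written to the file literally (assumption: Teckel accepts them in `path`).
cat > "$PIPELINE_FILE" << 'EOF'
version: "3.0"
input:
  - name: employees
    format: csv
    path: '${INPUT_DIR}/employees.csv'
    options:
      header: true
output:
  - name: employees
    format: parquet
    mode: overwrite
    path: '${OUTPUT_DIR:data/output}/employees'
EOF

# Show the path lines so the surviving placeholders can be inspected.
grep -n 'path:' "$PIPELINE_FILE"
```

With an unquoted delimiter (`<< EOF`) the shell would try to expand the placeholders itself before Teckel ever sees them, which is why the quoted form is used here.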

- JDK 8 or 11 (Spark 3.5.x is not fully supported on Java 17 in all configurations).
- Apache Spark 3.5.x at runtime (declared `provided` in the library artifacts).
- YAML pipeline files.
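Since the requirements above name JDK 8 or 11, a quick pre-flight check before building may be useful. This is a minimal sketch and not part of Teckel: `java_major` is a hypothetical helper that reduces a version string such as `1.8.0_292` or `11.0.2` to its major number.

```shell
#!/bin/sh
# java_major: hypothetical helper, prints the major version for a Java
# version string ("1.8.0_292" gives 8, "11.0.2" gives 11).
java_major() {
  case "$1" in
    1.*) echo "$1" | cut -d. -f2 ;;                 # legacy scheme: 1.<major>.x
    *)   echo "$1" | cut -d. -f1 | cut -d- -f1 ;;   # modern scheme: <major>.x or <major>-ea
  esac
}

# `java -version` prints to stderr; extract the quoted version if Java is installed.
if command -v java >/dev/null 2>&1; then
  version=$(java -version 2>&1 | sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1)
  major=$(java_major "$version")
  case "$major" in
    8|11) echo "JDK $major: matches the listed requirement" ;;
    *)    echo "JDK $major: outside the JDK 8 / 11 range listed above" ;;
  esac
fi
```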

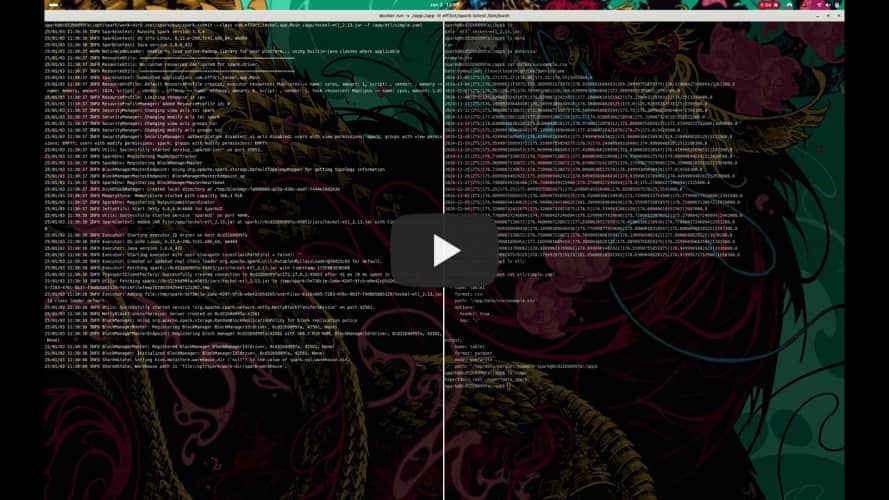

If you don't already run Spark, the eff3ct/spark image (eff3ct0/spark-docker) is a ready-to-use cluster.

Clone and build:

    git clone https://github.com/eff3ct0/teckel.git
    cd teckel
    sbt cli/assembly

The CLI uber JAR is `cli/target/scala-2.13/teckel-etl_2.13.jar` (Spark bundled in).

    -f, --file <path>     run the pipeline at <path>
    -c, --console         read YAML from stdin
    --dry-run             print the execution plan, no Spark
    --doc                 emit Markdown documentation for the pipeline
    --graph               emit the DAG (Mermaid by default)
    --server [--port N]   start the embedded REST server

    /opt/spark/bin/spark-submit --class com.eff3ct.teckel.app.Main teckel-etl_2.13.jar -f /path/to/pipeline.yaml

    cat << 'EOF' | /opt/spark/bin/spark-submit --class com.eff3ct.teckel.app.Main teckel-etl_2.13.jar -c
    version: "3.0"
    input:
      - name: t
        format: csv
        path: '/path/to/data/file.csv'
        options:
          header: true
          sep: '|'
    output:
      - name: t
        format: parquet
        mode: overwrite
        path: '/path/to/output/'
    EOF

Important: Teckel CLI as dependency / Teckel ETL as framework.

The Teckel CLI is also usable as a library dependency. The uber JAR is named teckel-etl (not teckel-cli) precisely to distinguish the CLI from the framework when both end up on the same classpath. See Integration with Apache Spark for embedding details.
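The options above can be chained into a validate-then-run step. The following is a minimal sketch under stated assumptions: that `--dry-run` can be combined with `-f`, and that it exits non-zero when validation fails (neither is confirmed here). `teckel` and `validate_then_run` are hypothetical helper names, and `SPARK_SUBMIT` / `TECKEL_JAR` are hypothetical variables whose defaults mirror the commands shown above.

```shell
#!/bin/sh
# Hypothetical overrides; the defaults mirror the spark-submit commands above.
SPARK_SUBMIT="${SPARK_SUBMIT:-/opt/spark/bin/spark-submit}"
TECKEL_JAR="${TECKEL_JAR:-teckel-etl_2.13.jar}"

# teckel: hypothetical wrapper that forwards its arguments to the CLI main class.
teckel() {
  "$SPARK_SUBMIT" --class com.eff3ct.teckel.app.Main "$TECKEL_JAR" "$@"
}

# validate_then_run: dry-run first, then execute only if the dry run succeeds.
# Assumes --dry-run combines with -f and reports failure through its exit code.
validate_then_run() {
  teckel -f "$1" --dry-run && teckel -f "$1"
}

# Usage: validate_then_run /path/to/pipeline.yaml
```

Keeping the main class and JAR name in one wrapper means the long `spark-submit` line is written once, and the same helper can front `--doc` or `--graph` calls.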

Teckel integrates with any existing Spark application, either as a CLI invoked via `spark-submit` or as a library (`teckel-api`) embedded in your Scala code. Three entry points are exposed: `etl[F, O]` (polymorphic in the effect type `F`), `etlIO[O]` (fixes `F = IO`), and `unsafeETL[O]` (synchronous). See Integration with Apache Spark.

The Docusaurus site under `teckel-docs/` contains the full guide: Getting Started, Transformations, API, CLI, Plugins, and Examples. The canonical language reference lives in teckel-spec v3.0.

Contributions welcome — fork the repository and open a pull request. For changes affecting the YAML surface, please cross-check against the Teckel Specification before submitting.

Teckel is available under the MIT License. See the LICENSE file for details.

For any issues or questions, open an issue on GitHub or contact Rafael Fernandez.